Scaling Data with PySpark

The Architecture of Modern PySpark

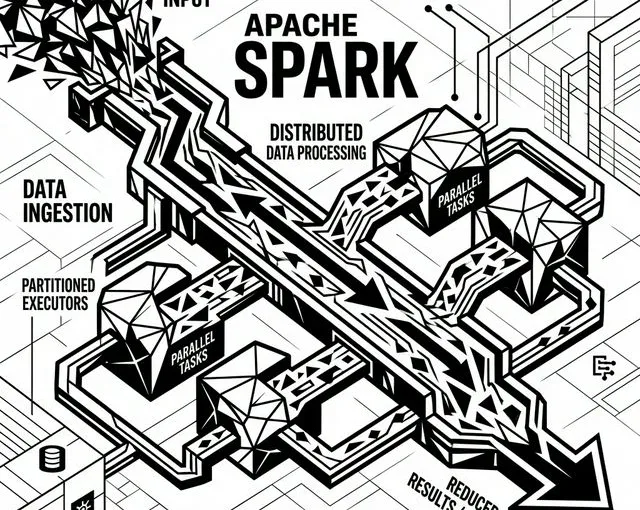

When data agencies face analytical workloads that grow into the terabytes, single-machine Pandas processing hits a wall, because a Pandas DataFrame has to fit in the memory of one machine. Enter PySpark: the Python interface for Apache Spark.

Built on a cluster-computing framework, PySpark splits data processing into tasks and runs them in parallel across a cluster of machines. By keeping intermediate results in memory rather than writing them to disk between stages, it runs MapReduce-style workloads significantly faster than legacy disk-based Hadoop MapReduce.

Core Paradigms of PySpark

- Resilient Distributed Datasets (RDDs): The foundational abstraction of Spark. They are immutable, distributed collections of records, partitioned automatically across your cluster and rebuilt from their lineage if a partition is lost.

- DataFrames and SQL: In later releases, Spark added DataFrames, a schema-aware and highly optimized API that can be queried much like SQL tables.

- Lazy Evaluation: PySpark does not execute a lineage of transformations until an action (like .show() or .write.save()) is triggered. This allows it to optimize the physical execution plan as a whole before reading any data.

“A robust engine isn’t judged by how much it can compute on a massive server, but how efficiently it dictates parallel threads across dozens of small ones.”

Demlon deploys PySpark via Amazon EMR and cloud-native ingestion networks to speed up the mining of enterprise-grade relational schemas. If your pipeline is blocking critical bi-weekly analytical reports, distributed computing is often the answer.